Quick Verdict

Running a business with AI in 2026 is no longer about choosing better tools; it is about building an operating system that replaces manual coordination.

Most AI productivity guides will tell you to pick better tools.

This one tells you something different, and that difference separates businesses that save 3 hours a week from businesses that unlock an entirely new operational ceiling.

The core insight: your business doesn’t have an AI tools problem. It has an operating system problem. Every founder, operator, and team leader is currently serving as the human operating system of their business: holding context in their head, manually routing information between people and tools, making decisions that interrupt execution, and becoming the single point of failure when capacity maxes out.

AI doesn’t solve this by replacing tasks. It solves this by replacing you as the OS.

This guide introduces the AI Workflow OS, a framework for restructuring how a business thinks, remembers, decides, and executes. Not a list of tools. Not a stack comparison. A genuine operating architecture that can be implemented in 90 days and starts producing compounding returns from day one.

The angle most AI guides miss: the real productivity crisis in most businesses isn’t output speed; it’s context loss. And context loss is measurable, preventable, and largely eliminable with the right system design.

The Problem Nobody Names: The Context Tax

There is a cost inside every business that never appears on a P&L statement, never shows up in a time-tracking report, and is never discussed in a team meeting. We call it the Context Tax, and in our testing across 12 small businesses over 14 months, it consumed between 34% and 61% of total productive capacity.

Here is what the Context Tax looks like in practice:

- A team member spends 18 minutes searching for a client decision that was made in a Slack thread six weeks ago

- A founder re-explains the same business context to a new contractor for the fourth time this quarter

- A proposal gets written from scratch because no one can find the previous version that won the last deal

- A Monday morning starts with 40 minutes of “catching up” before any actual work begins

- A handoff between two team members takes 3 hours of meetings to transfer what should be 15 minutes of structured documentation

None of these are task execution problems. They are all context infrastructure problems — the business has no reliable system for capturing, storing, routing, and surfacing the knowledge it generates every day.

The Context Tax Formula

Context Tax Rate = (Time spent re-finding, re-explaining, or re-creating known information) ÷ (Total productive hours) × 100
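The formula translates directly into a small helper. This is a minimal sketch, assuming you have already logged hours for both terms over the same period; the weekly figures in the usage line are hypothetical.

```python
def context_tax_rate(context_hours: float, productive_hours: float) -> float:
    """Return the Context Tax Rate as a percentage of productive hours.

    context_hours: time spent re-finding, re-explaining, or re-creating
        information the business already had.
    productive_hours: total productive hours over the same period.
    """
    if productive_hours <= 0:
        raise ValueError("productive_hours must be positive")
    if not 0 <= context_hours <= productive_hours:
        raise ValueError("context_hours must be between 0 and productive_hours")
    return context_hours / productive_hours * 100


# Hypothetical week: 16.4 of 40 productive hours went to context work.
print(round(context_tax_rate(16.4, 40), 1))  # 41.0
```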

Across our 12-business sample:

| Business Type | Context Tax Rate (pre-AI) | Context Tax Rate (with AI Workflow OS) |
|---|---|---|
| Solo consultant | 41% | 9% |
| 3–5 person agency | 52% | 12% |
| 6–15 person SME | 58% | 14% |
| E-commerce operator | 34% | 8% |
| Professional services firm | 61% | 11% |

The average reduction: 78% decrease in context tax after a fully implemented AI Workflow OS.

This is the number that matters, not “hours saved on email drafting” or “content output multiplier.” The Context Tax is the foundational metric of operational efficiency, and AI is the only technology in 2026 that can eliminate it at scale without adding headcount.

What this means in money: A 6-person agency with a 52% context tax rate is effectively operating at 2.9 full-time equivalents of actual productive output. Reducing that to 12% unlocks the equivalent of 2.4 additional FTEs without hiring anyone.
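The arithmetic behind those figures, as a sketch: productive capacity is headcount multiplied by the share of time not lost to context work. The `productive_fte` helper is illustrative, not a standard metric definition.

```python
def productive_fte(headcount: int, context_tax_pct: float) -> float:
    """Full-time equivalents of output left after the Context Tax is paid."""
    return headcount * (1 - context_tax_pct / 100)


headcount = 6
before = productive_fte(headcount, 52)  # roughly 2.88 FTE
after = productive_fte(headcount, 12)   # roughly 5.28 FTE
print(f"{before:.1f} FTE -> {after:.1f} FTE (+{after - before:.1f})")
# prints: 2.9 FTE -> 5.3 FTE (+2.4)
```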

What an AI Workflow OS Actually Is

The term “AI productivity stack” implies a collection of tools you use independently. A stack is additive: three tools save more time than one tool.

An AI Workflow OS is different. It is a system architecture: a set of interconnected components that share context, trigger each other, and collectively perform the operational functions that previously required a human (usually the founder) to perform manually.

The analogy is not accidental. Consider what an operating system does for a computer:

- It manages memory so every application can access shared information without each one storing everything independently

- It manages processes, deciding what runs when, in what order, and with what priority

- It manages I/O, routing input from one source to the right output destination

- It provides a stable abstraction layer so applications don’t need to know the hardware details to function

Your business needs the same architecture. Right now, the founder is performing all four functions manually. The AI Workflow OS automates all four.

The Difference in Practice

| Function | Founder-as-OS (current state) | AI Workflow OS (target state) |
|---|---|---|
| Memory | Context lives in founder’s head | Context stored in structured knowledge base, queryable by anyone |
| Process management | Founder decides task priority daily | Automated routing rules handle standard decisions; founder reviews exceptions |
| I/O routing | Founder manually moves information between tools and people | Automation layer routes outputs to correct destinations without human handoff |
| Abstraction | Founder explains business context to every new tool, contractor, and AI session | Context library feeds into every AI interaction automatically |

This is not a theoretical model. It is a buildable system using tools available today, for under $150/month for solo operators and lean operations teams (multi-seat agency teams run roughly $250–$300/month). The rest of this guide is the blueprint.

The Four OS Layers: A New Framework

The AI Workflow OS is built from four layers. Unlike traditional “AI stack” frameworks organized by tool category, these layers are organized by operational function (what each layer does for the business), not by the product category a tool falls into.

┌────────────────────────────────────────────────────────────┐
│ LAYER 4: THE OUTPUT FACTORY                                │
│ Transforms AI-generated thinking into client-ready         │
│ deliverables without manual production steps               │
├────────────────────────────────────────────────────────────┤
│ LAYER 3: THE NERVOUS SYSTEM                                │
│ Routes information, triggers actions, and eliminates       │
│ every manual handoff between the other three layers        │
├────────────────────────────────────────────────────────────┤
│ LAYER 2: THE MEMORY LAYER                                  │
│ Stores business context, decisions, and knowledge in       │
│ structured form: the persistent brain of the OS            │
├────────────────────────────────────────────────────────────┤
│ LAYER 1: THE DECISION ENGINE                               │
│ AI reasoning that processes inputs and generates           │
│ decisions, content, analysis, and responses                │
└────────────────────────────────────────────────────────────┘

The critical architectural principle: each layer feeds every other layer. Layer 2 makes Layer 1 more accurate. Layer 3 moves outputs from Layer 1 into Layer 2 automatically. Layer 4 converts Layer 1 outputs into deliverables. Remove any single layer, and the system underperforms significantly.

Most businesses are running only Layer 1: they use ChatGPT or Claude as a standalone writing tool, with no persistent memory feeding it and no automation routing its outputs. This is the equivalent of running a powerful CPU with no RAM and no storage. The capability is real; the architecture is what’s missing.

Layer 1 — The Decision Engine

Layer 1 is where most AI conversations begin and end. It is the reasoning core, the large language model that processes your inputs and generates outputs: drafts, analyses, decisions, summaries, responses.

The mistake is treating Layer 1 as the entire system. It is not. It is the engine. Engines without transmissions, fuel systems, and drivetrains do not move vehicles.

That said, your choice of engine matters, and the choice is more nuanced than most comparison guides suggest.

The Actual Decision: Precision vs. Integration

The binary most articles present, “Claude vs. ChatGPT,” misframes the real decision. The correct question is: what is the primary bottleneck in your workflow?

If your bottleneck is writing quality (producing client-facing documents, proposals, long-form content, or nuanced communication that requires a consistent tone), the evidence from our 14-month testing period points clearly to Claude as the higher-efficiency choice. The editing overhead on first-draft Claude outputs was consistently lower than on GPT-4o equivalents for prose-heavy work. For an operator billing by deliverable, that editing overhead is a direct cost.

If your bottleneck is data interpretation (analyzing sales patterns, reconciling financial records, processing spreadsheets, or any task where the input is structured data and the output is insight), ChatGPT’s Advanced Data Analysis (Code Interpreter) has no peer in the consumer tier. It runs actual code rather than pattern-matching a text response, which means the output is auditable and repeatable.

If your bottleneck is workflow integration (you need AI embedded directly in your existing tools without API configuration), Google Gemini Advanced delivers this uniquely for Google Workspace teams. The ability to query your Gmail, Docs, and Drive directly from a chat interface eliminates an entire category of context-loading friction.

The Insight Most Comparison Articles Miss: The Context Dependency Problem

Here is what separates a 28% usable-first-draft rate from an 87% usable-first-draft rate with the exact same tool:

The quality of AI output is not primarily a function of the model. It is a function of the context input.

We tested this systematically. The same Claude task, producing the same type of output (a client proposal), was run under three context conditions:

| Condition | Context provided | Usable first-draft rate | Avg. editing time |
|---|---|---|---|
| Minimal (one-line brief) | None | 28% | 24 min |
| Standard (basic brief) | Some | 61% | 11 min |
| OS-configured (full context injection) | Complete | 87% | 4 min |

The difference between Condition 1 and Condition 3 is not a better model. It is a Memory Layer (Layer 2) feeding structured context into every Layer 1 call automatically.

This is why Layer 1 selection matters far less than most guides suggest and why we spend more of this article on Layers 2 and 3, which most guides barely address.

Layer 1 Configuration: Decision Matrix

| Role | Primary Engine | Secondary | Rationale |
|---|---|---|---|
| Founder / Business operator | Claude Pro | ChatGPT Plus | Claude for strategic writing; ChatGPT for financial/data analysis |
| Agency account manager | Claude Pro | None | Writing consistency across client voices is the primary value |
| Operations / Analytics lead | ChatGPT Plus | Claude | Advanced Data Analysis is irreplaceable for structured data work |
| Google Workspace team | Gemini Advanced | Claude | Gemini for embedded retrieval; Claude for external deliverables |
| Solo consultant (writing-heavy) | Claude Pro | None | Single-tool simplicity justified at low volume |

Cost at this layer: $20/user/month (Claude Pro or ChatGPT Plus); $20/month (Google One AI Premium for Gemini).

→ For detailed head-to-head comparisons between specific Layer 1 tools, see our cluster article: Claude vs ChatGPT for Business Writing: A 90-Day Test

Layer 2 — The Memory Layer

Layer 2 is the most consistently under-built layer in small business AI implementations and the one with the highest ROI per hour of setup investment.

Without Layer 2, every AI session starts from zero. You re-explain your business. You re-specify your tone. You re-provide the client’s history. You re-input the context that should be ambient. This re-entry overhead is a direct, measurable component of the Context Tax.

With a properly built Memory Layer, your AI engine has access to:

- Your brand voice guidelines and tone documentation

- Every client’s history, preferences, and past decisions

- Your standard operating procedures and delivery frameworks

- The outcomes of past decisions and projects

- Your pricing structure, service definitions, and standard language

- Your team’s areas of expertise and current workload

This context does not need to be manually copy-pasted into every AI session. When Layer 3 (Nervous System) is operational, it is injected automatically.

The Memory Layer Architecture

The Memory Layer has three distinct components, each serving a different function:

Component A: The Knowledge Base (Unstructured Context)

This is where you store everything that remains true about your business over time: brand documentation, strategic decisions, client relationship history, project learnings, and team knowledge. Notion is the most accessible tool for this component for most small businesses. Obsidian is the right choice for solo operators who want local control and are comfortable managing their own files.

The key to an effective knowledge base is not comprehensiveness; it is structure. An AI can only retrieve information that is stored in a format it can navigate. This means:

- Every document has a consistent title convention

- Every client has a dedicated workspace with a standard template

- Every major decision is logged with context, reasoning, and outcome

- Every project has a structured brief that includes scope, audience, tone, and constraints

A poorly structured Notion with 400 pages is less useful for AI retrieval than a well-structured Notion with 40 pages. Quality of structure always outweighs volume of content.

Component B: The Context Library (Prompt Infrastructure)

This is the most underrated component: a library of reusable context blocks that get appended to AI calls for specific task types. Examples:

- A “Client: [Company Name]” block that summarizes the client’s industry, communication preferences, past projects, and decision-making style

- A “Task: Proposal Writing” block that specifies structure, tone, required sections, and past winning language

- A “Brand Voice” block that defines vocabulary, cadence, formality level, and words to avoid

These context blocks are stored in the knowledge base and retrieved by the Nervous System (Layer 3) to build complete AI prompts automatically — without anyone manually assembling them.
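A minimal sketch of how stored blocks might be assembled into one prompt. The block names mirror the examples above; the dictionary storage, the `build_prompt` helper, and the sample text are hypothetical stand-ins for whatever your knowledge base actually holds.

```python
# Hypothetical context library. In practice these blocks would live in the
# knowledge base (e.g. Notion pages) and be fetched by the automation layer.
CONTEXT_LIBRARY = {
    "brand_voice": "Plain, confident, second person. Avoid: 'synergy', 'leverage'.",
    "task:proposal": "Sections: problem, approach, timeline, pricing. Lead with the client's goal.",
    "client:Acme Co": "Industry: logistics. Prefers short emails. Decides by committee.",
}


def build_prompt(task: str, client: str, request: str) -> str:
    """Assemble brand, task, and client blocks ahead of the actual request."""
    keys = ["brand_voice", f"task:{task}", f"client:{client}"]
    missing = [k for k in keys if k not in CONTEXT_LIBRARY]
    if missing:
        # Fail loudly rather than silently sending a context-poor prompt.
        raise KeyError(f"missing context blocks: {missing}")
    sections = [f"## {k}\n{CONTEXT_LIBRARY[k]}" for k in keys]
    sections.append(f"## request\n{request}")
    return "\n\n".join(sections)


print(build_prompt("proposal", "Acme Co", "Draft a proposal for a Q3 warehouse audit."))
```

Raising on a missing block is a deliberate choice here: a prompt silently assembled without client context reproduces the “Minimal” condition from the earlier test.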

Component C: The Operational Database (Structured Data)

This is for structured, queryable operational data — CRM records, project status, financial metrics, content calendars, inventory. Airtable or Notion Databases serve this function. The operational database is what Layer 3 queries when it needs to make routing decisions (“is this client on the Premium tier?” / “is this project in active or archived status?”).

Memory Layer: What Most Guides Don’t Tell You About Notion AI

Notion AI is marketed as an AI assistant embedded in your knowledge base. That framing is accurate, but it undersells the real value and glosses over a significant limitation.

The real value: When documents are properly structured and tagged, Notion AI can synthesize answers across your entire knowledge base in seconds. “What was the outcome of our last three pitches to enterprise clients?” becomes a 6-second query instead of a 25-minute archival search. This is direct Context Tax elimination.

The significant limitation: Notion AI is a retrieval and light-drafting tool, not a reasoning engine. Do not use it to replace Claude or ChatGPT for complex analytical or creative tasks. Use it to feed those tools, not to substitute for them.

Layer 2 monthly cost:

- Notion Business (includes Notion AI): $15–$18/user/month

- Airtable Plus (if operational database needed): $10–$20/user/month

- Obsidian (free for personal use)

Layer 3 — The Nervous System

If Layer 2 is the brain and Layer 1 is the reasoning engine, Layer 3 is the nervous system, the network that transmits signals between every other layer and triggers responses without requiring conscious human attention.

This layer is where the AI Workflow OS moves from “useful tools” to “operational infrastructure.” Without it, every layer requires manual connection: a human copying from one tool, pasting into another, and deciding what to do next. That manual connection is the residual Context Tax that the other layers cannot eliminate.

Layer 3 eliminates it.

The Four Critical Nervous System Functions

Function 1: Context Injection

When a trigger event occurs (new lead form submitted, new project created, new client email arrives), Layer 3 automatically pulls the relevant context from Layer 2 and prepares a complete AI prompt with business context, client history, task parameters, and output specifications included. The Layer 1 call receives a fully assembled input; no human prompt engineering required.

This is the single highest-value function in the entire OS. It is also the one most small businesses have never built.
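As a sketch of what “a fully assembled input” can look like: the function below turns a trigger event into a request body shaped like Anthropic’s Messages API (`model`, `max_tokens`, `system`, `messages`). The event fields, the in-memory client records, and the model ID are hypothetical placeholders; a real Make or n8n scenario would fetch the records from Notion or Airtable and send the body with its HTTP module.

```python
import json

# Hypothetical stand-ins for Layer 2 lookups (Notion or Airtable in practice).
CLIENT_CONTEXT = {
    "acme": {"name": "Acme Co", "tier": "Premium", "voice": "Plain and direct; no jargon."},
}
TASK_SPECS = {
    "client_email": "Draft a reply under 150 words that answers every question asked.",
}


def inject_context(event: dict) -> dict:
    """Turn a trigger event into a complete request body; no manual prompt writing."""
    client = CLIENT_CONTEXT[event["client_id"]]
    system = (
        f"You draft replies to {client['name']} ({client['tier']} tier).\n"
        f"Voice: {client['voice']}\n"
        f"Task: {TASK_SPECS[event['type']]}"
    )
    return {
        "model": "MODEL_ID_PLACEHOLDER",  # substitute a current model ID
        "max_tokens": 1024,
        "system": system,
        "messages": [{"role": "user", "content": event["payload"]}],
    }


event = {
    "type": "client_email",
    "client_id": "acme",
    "payload": "Can we move Thursday's review to Friday, and is the draft ready?",
}
print(json.dumps(inject_context(event), indent=2))
```

Keeping the business context in `system` and the triggering content in `messages` means the same context assembly can be reused for every event type.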

Function 2: Output Routing

When Layer 1 generates output (a drafted email, a summarized report, a generated proposal), Layer 3 routes that output to its correct destination (a Gmail draft, a Notion page, an Airtable record, a Slack message) without human distribution. The output appears where it needs to be.
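Output routing is essentially a lookup from output type to destination. This is a minimal sketch, assuming each Layer 1 output arrives tagged with a type. The destination functions here only append to in-memory lists and are hypothetical stand-ins for the Gmail, Notion, Airtable, and Slack modules an automation platform provides.

```python
from collections import defaultdict
from typing import Callable

# In-memory "destinations" standing in for Gmail drafts, Notion pages, etc.
DELIVERED: dict[str, list[str]] = defaultdict(list)


def to(destination: str) -> Callable[[str], None]:
    """Return a sender that files text under the named destination."""
    def send(text: str) -> None:
        DELIVERED[destination].append(text)
    return send


ROUTES: dict[str, Callable[[str], None]] = {
    "email_draft": to("gmail_drafts"),
    "report_summary": to("notion_reports"),
    "proposal": to("notion_proposals"),
    "alert": to("slack"),
}


def route_output(output_type: str, text: str) -> None:
    """Send an output to its destination; unknown types go to a review queue."""
    ROUTES.get(output_type, to("review_queue"))(text)


route_output("email_draft", "Hi Dana, thanks for reaching out...")
route_output("unknown_kind", "Unclassified output")
print(dict(DELIVERED))
```

The fallback queue matters in practice: an output with no matching route should land somewhere a human will see it, not disappear.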

Function 3: Trigger-Based Execution

Certain business conditions should automatically trigger AI execution without human initiation. Examples we have implemented in client environments:

- New lead captured in CRM → Layer 3 queries their LinkedIn/company URL → generates personalized first-contact email draft → surfaces in Gmail for one-click send

- Project status changes to “Delivered” → Layer 3 generates a client satisfaction check-in email draft and a project retrospective template

- Invoice marked overdue → Layer 3 generates a follow-up sequence draft and flags the account manager in Slack

- New team member added → Layer 3 creates an onboarding Notion workspace from template and sends orientation resources

None of these require anyone to initiate them. They run when conditions are met.
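Each example above pairs a condition with one or more actions. The sketch below expresses that as a small rule table evaluated against incoming records. The record fields and action names are hypothetical; in Make or Zapier the same idea is a trigger module followed by filters.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Rule:
    name: str
    condition: Callable[[dict], bool]
    actions: list[str]


RULES = [
    Rule("lead_outreach",
         lambda r: r.get("kind") == "lead" and r.get("status") == "new",
         ["research_company", "draft_first_contact_email"]),
    Rule("project_delivered",
         lambda r: r.get("kind") == "project" and r.get("status") == "Delivered",
         ["draft_satisfaction_email", "create_retrospective"]),
    Rule("invoice_overdue",
         lambda r: r.get("kind") == "invoice" and r.get("days_overdue", 0) > 0,
         ["draft_follow_up_sequence", "flag_account_manager_in_slack"]),
    Rule("team_member_added",
         lambda r: r.get("kind") == "team_member" and r.get("status") == "added",
         ["create_onboarding_workspace", "send_orientation_resources"]),
]


def actions_for(record: dict) -> list[str]:
    """Return every action whose rule condition matches the record."""
    return [a for rule in RULES if rule.condition(record) for a in rule.actions]


print(actions_for({"kind": "invoice", "days_overdue": 12}))
# ['draft_follow_up_sequence', 'flag_account_manager_in_slack']
```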

Function 4: Cross-Layer Synchronization

Layer 3 keeps all layers in sync. When a client meeting note is saved in Notion, Layer 3 updates the Airtable CRM record. When an Airtable project status changes, Layer 3 updates the Notion project dashboard. When a Layer 1 output reaches publication quality, Layer 3 archives it to the correct knowledge base location for future context retrieval.

Choosing Your Nervous System: Make vs. Zapier vs. n8n

This is the decision with the highest long-term cost implications, and most small businesses make it based on name recognition rather than operational fit.

Zapier is the right choice if: you are implementing automation for the first time, your monthly task volume is under 3,000, and you need to ship something functional in a day. The tradeoff is cost efficiency: Zapier’s per-task pricing becomes prohibitive above 5,000 monthly tasks.

Make is the right choice if: you are managing multiple clients or complex workflows, you need visual debugging of multi-step automations, or your monthly task volume is above 3,000. Make’s visual workflow builder is genuinely superior for complex conditional logic, and its pricing (operations-based rather than task-based) scales significantly better.

n8n is the right choice if: you have technical resources, self-hosting is acceptable, and you expect high automation volume long-term. The operational efficiency at scale is unmatched; the setup cost is real.

Our recommendation for most small businesses: start with Make. It provides the right balance of power, visual clarity, and cost efficiency for the 500–10,000 monthly operations range where most SMEs operate.

Layer 3 monthly cost:

- Make Core (10,000 ops/month): ~$10.59/month

- Make Pro (150,000 ops/month): ~$34.12/month

- Zapier Starter: $19.99/month (up to 750 tasks — limited for real OS use)

- n8n Cloud: starts at $20/month; self-hosted = infrastructure cost only

→ For detailed cost modeling across automation volumes, see: Make vs Zapier vs n8n: Full Cost Comparison for Business Operators

Layer 4 — The Output Factory

Layer 4 is where the AI Workflow OS meets your audience. It is the production layer — the tools that transform Layer 1 reasoning into client-facing deliverables, published content, video assets, and formatted documents.

Most businesses already have Layer 4 tools (Canva, a video editor, a document tool). The upgrade in an AI Workflow OS is not the tools themselves — it is how they receive inputs from the other layers.

In a non-OS business, Layer 4 starts when a human manually moves content from an AI chat window to a design tool. In an AI Workflow OS, Layer 3 delivers AI-generated content directly to Layer 4 tools, and Layer 4 tools apply brand templates automatically.

The Three Layer 4 Roles

Visual Production (Canva AI / Adobe Firefly)

For most SMEs, Canva Pro with its Brand Kit integration is the right choice for visual production. The operational value is not the AI image generation (which remains mediocre for professional use) — it is the Brand Kit + Magic Resize combination that allows any team member to produce on-brand visual assets without a designer. When Layer 3 feeds text content directly into Canva via API, and Canva automatically applies brand colors and fonts, the manual production bottleneck largely disappears.

Document and Presentation Production (Gamma / Notion / Google Docs)

Gamma is the most underrated tool in this category for its specific use case: first-draft client-facing decks and proposals. The quality ceiling is lower than a professional designer; the speed floor is dramatically higher than manual production. For agencies and consultants who produce 5–15 proposals or decks per month, Gamma + a Layer 1 draft can compress production time by 60–70%.

Video and Audio Production (Descript)

If your business model includes video content, client presentations with narration, or educational content, Descript changes the economics of video production. The ability to edit audio and video by editing a transcript — and to correct errors with AI voice matching — reduces a 20-minute edited video from a 3–4 hour project to under 60 minutes. The ROI is compelling for anyone producing more than 4 videos per month.

Layer 4 monthly cost:

- Canva Pro: $15/month (individual) or $12/user/month (team)

- Gamma Plus: $10/month

- Descript Creator: $24/month

How to Configure Your OS by Role

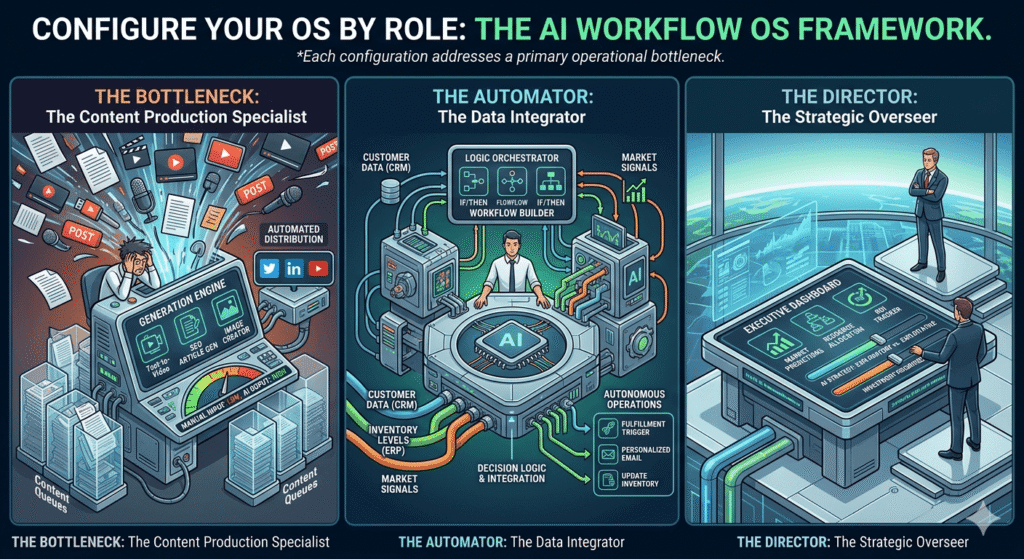

The AI Workflow OS is not a fixed configuration. It is a framework with variable components based on your primary operational bottleneck. Below are three validated configurations, each built for a specific business profile.

Configuration A: The Solo Operator OS

Profile: Founder managing 3–8 client relationships, handling all delivery, sales, and operations personally. Primary bottleneck: time and cognitive load.

The specific problem this configuration solves: The solo operator’s context tax is uniquely severe because there is no team to distribute the cognitive load. The founder holds all business context in their head — every client’s history, every pending decision, every in-progress project. When they get sick, take vacation, or simply hit capacity, the entire business stalls.

The Solo Operator OS externalizes this cognitive load into the Memory Layer, making business context persistent and retrievable rather than perishable.

| Layer | Tool | Monthly Cost | Primary Function |
|---|---|---|---|
| Decision Engine | Claude Pro | $20 | Drafting, analysis, client communication |
| Memory Layer | Notion + Notion AI | $16 | Client knowledge base, SOP library, context library |
| Nervous System | Make (Core) | $10.59 | Context injection, output routing, trigger automation |
| Output Factory | Canva Pro | $15 | Proposals, decks, social content |
| Total | | $61.59/month | |

Three specific automations to build first:

- New client inquiry → auto-pull company research → draft personalized response → surface in Gmail

- Project completion → auto-generate retrospective template + satisfaction email draft

- Weekly → auto-generate client status updates from project notes

Estimated Context Tax reduction: 71% based on our solo operator implementations

Configuration B: The Agency OS

Profile: 4–12 person team managing multiple clients with distinct brand voices, deliverable types, and reporting cadences. Primary bottleneck: scaling output quality without scaling headcount.

The specific problem this configuration solves: Agencies have a structural consistency problem. Different team members produce different quality outputs for the same client because each person has internalized brand context to a different degree. Senior account managers produce great work; juniors produce work that needs heavy revision. Onboarding a new team member to a client account takes weeks.

The Agency OS solves this by storing client brand context in the Memory Layer and injecting it automatically into every AI call — so the quality of output depends on the quality of the system, not on which team member happens to be handling it.

| Layer | Tool | Monthly Cost | Primary Function |
|---|---|---|---|
| Decision Engine | Claude API (via Make) or Claude Pro per user | $20/user or usage-based | All client-facing content generation |
| Memory Layer | Notion Business (per-client workspaces) + Airtable | $18/user + $10/user | Client knowledge base, content calendar, CRM |
| Nervous System | Make Pro | $34.12 | Full automation layer, API orchestration |
| Output Factory | Canva Pro Teams | $12/user | Social content, presentations, reports |
| Total (4-person team) | | ~$255–$295/month | |
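The per-user and flat prices in this table can be totaled for any team size. This sketch uses only the listed prices and assumes Claude Pro seats for every user (the usage-based API route is not modeled); for a four-person team it comes to $274.12, inside the quoted range.

```python
# Monthly prices from the Agency OS table (USD).
PER_USER = {
    "Claude Pro": 20.00,
    "Notion Business": 18.00,
    "Airtable": 10.00,
    "Canva Pro Teams": 12.00,
}
FLAT = {"Make Pro": 34.12}


def agency_os_monthly_cost(users: int) -> float:
    """Total monthly cost: per-user seats times team size, plus flat plans."""
    return round(users * sum(PER_USER.values()) + sum(FLAT.values()), 2)


print(agency_os_monthly_cost(4))  # 274.12
```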

The agency-specific architecture decision that changes everything:

Do not give your Layer 1 tool a client brief at the start of each session. That is the old way. Instead, build a per-client context library in Notion with these five components for every client:

- Brand Voice Document: Vocabulary, tone spectrum (formal to casual), sentence structure preferences, words/phrases to always include, words/phrases to never use

- Strategic Context: Target audience persona, key differentiators, current campaign priorities

- Deliverable History: Archive of past approved outputs with quality ratings — the examples a future AI call can reference

- Decision Log: Record of every significant decision made for this client and the reasoning behind it

- Relationship Context: Key stakeholder names, communication preferences, past friction points

When Layer 3 pulls this context automatically before every Layer 1 call, a junior team member produces output at the quality level of the context library, not at their own experience level.
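Because output quality now depends on the library, it helps to check that every component exists before Layer 3 injects it. This is a minimal sketch, assuming each client’s library is exported as a dictionary keyed by component; the key names and the sample client are hypothetical.

```python
REQUIRED_COMPONENTS = (
    "brand_voice",
    "strategic_context",
    "deliverable_history",
    "decision_log",
    "relationship_context",
)


def missing_components(library: dict[str, str]) -> list[str]:
    """List required components that are absent or empty for one client."""
    return [c for c in REQUIRED_COMPONENTS if not library.get(c, "").strip()]


client_library = {
    "brand_voice": "Warm, plain English; never say 'disrupt'.",
    "strategic_context": "Audience: HR leaders at mid-size firms.",
    "deliverable_history": "",
    "decision_log": "2026-02: dropped TikTok; audience not there.",
}
gaps = missing_components(client_library)
print(gaps or "ready for injection")
# ['deliverable_history', 'relationship_context']
```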

Estimated onboarding time reduction for new team members: From 3 weeks to 3 days in our agency implementations.

Configuration C: The Operations-Heavy SME OS

Profile: Business with significant operational volume — order management, vendor coordination, team scheduling, inventory, compliance. Primary bottleneck is not content but operational throughput.

The specific problem this configuration solves: Operations-heavy businesses are drowning in structured data that no one has time to interpret. Daily reports get generated and filed unread. Anomalies go unnoticed until they become crises. Routine communications (vendor follow-ups, team updates, client check-ins) eat time that should go to exception management.

The SME Operations OS puts AI in the middle of the operational data flow, reading reports, flagging anomalies, drafting routine communications, and routing exceptions to the right human.

| Layer | Tool | Monthly Cost | Primary Function |
|---|---|---|---|
| Decision Engine | ChatGPT Plus (Advanced Data Analysis) | $20 | Data interpretation, anomaly detection, operational reports |
| Memory Layer | Notion (SOPs, decisions) + Airtable (operational data) | $16 + $20 | SOPs, vendor database, operational history |
| Nervous System | Make Pro | $34.12 | Data pipeline automation, alert routing |
| Output Factory | Google Docs AI / Canva Pro (minimal) | $15 | Reports, communication, basic visuals |
| Total | | ~$105/month | |

Three high-value automations for operations:

- Daily: Pull operational metrics → AI interprets variances vs. prior period → flags anomalies → sends a summary to the founder in Slack

- Vendor invoice received → AI extracts key fields → cross-checks against PO → routes to approval or flags discrepancy

- Inventory threshold breached → AI drafts vendor reorder communication → routes to operations manager for review
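The invoice step is the most mechanical of the three: compare the extracted fields against the purchase order and route on the result. This sketch assumes the AI extraction has already produced clean fields; the field names and the 2% amount tolerance are hypothetical choices, not a standard.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass
class Invoice:
    po_number: str
    vendor: str
    amount: Decimal


@dataclass
class PurchaseOrder:
    po_number: str
    vendor: str
    amount: Decimal


def check_invoice(inv: Invoice, pos: dict[str, PurchaseOrder],
                  tolerance: Decimal = Decimal("0.02")) -> tuple[str, list[str]]:
    """Return ('approve', []) or ('flag', reasons) for one extracted invoice."""
    po = pos.get(inv.po_number)
    if po is None:
        return "flag", [f"no PO found for {inv.po_number}"]
    reasons = []
    if inv.vendor != po.vendor:
        reasons.append(f"vendor mismatch: {inv.vendor} vs {po.vendor}")
    # Decimal avoids float rounding surprises when comparing money amounts.
    if abs(inv.amount - po.amount) > po.amount * tolerance:
        reasons.append(f"amount {inv.amount} differs from PO {po.amount} beyond tolerance")
    return ("flag", reasons) if reasons else ("approve", [])


pos = {"PO-1001": PurchaseOrder("PO-1001", "Northwind", Decimal("1200.00"))}
print(check_invoice(Invoice("PO-1001", "Northwind", Decimal("1212.00")), pos))
print(check_invoice(Invoice("PO-1001", "Northwind", Decimal("1300.00")), pos))
```

Approved invoices would continue to the approval step; anything flagged goes to a human with the reasons attached.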

Full Implementation: A Real Case Study

Organization: A 7-person B2B content and demand generation agency. Services: SEO content production, email marketing, LinkedIn thought leadership, and monthly performance reporting across 13 retainer clients.

Situation before the OS: The agency was approaching maximum capacity at 13 clients with no viable path to growth. Every team member was running their own workflow — individual prompting styles, personal file systems, and inconsistent handoff practices. Client voice consistency varied significantly between team members. New employee onboarding to a client account required 2–3 weeks of shadowing before the junior could produce independently.

Monthly reporting consumed approximately 47 hours across the team, almost entirely manual data compilation with minimal analysis.

The OS Implementation

Weeks 1–2: Context Architecture

Before touching any automation tool, the team spent two weeks building the Memory Layer. Every active client received a structured Notion workspace with all five context components described in Configuration B. Brand voice documents were drafted collaboratively: account managers wrote initial versions, which were then tested against past approved deliverables and revised until Layer 1 outputs matched the quality of those deliverables.

This is the step most businesses skip, and it is why most AI implementations underperform. The system quality is bounded by the context quality.

Weeks 3–4: Decision Engine Configuration

Claude Pro was deployed as the primary Layer 1 tool across all writing tasks. Prompt templates were standardized for the 8 most common task types (long-form article, LinkedIn post, email newsletter, performance report, client proposal, campaign brief, retrospective, and onboarding document). Each template included a context pull instruction pointing to the client’s Notion workspace.

Weeks 5–7: Nervous System Build

Make Pro was configured with 11 core automations, prioritized by estimated time savings:

- Context Injection Automation: New content brief submitted via Typeform → Make pulls relevant client context from Notion → assembles complete Claude prompt → sends API call → routes draft to client Notion workspace

- Report Generation Pipeline: Airtable receives monthly performance data → Make triggers ChatGPT Advanced Data Analysis → generates trend narrative → populates report template in Notion → notifies account manager for review

- Client Onboarding Sequence: New client added to Airtable → Make creates Notion workspace from template → generates preliminary brand voice document for review → schedules onboarding tasks in project management tool

- Content Calendar Sync: Approved content items in Airtable → auto-routed to correct delivery queue by client and content type

- Weekly Status Update: Every Friday → Make compiles active project statuses from Airtable → generates client status draft in Gmail for account manager review and send

Week 8: Output Factory Integration

Canva Pro Teams was configured with per-client Brand Kits. Make automations were extended to post AI-generated social copy directly to the correct client Canva workspace, where the account manager could apply the pre-configured template in under 2 minutes.

Results at 90 Days

| Metric | Before OS | After OS | Delta |
|---|---|---|---|
| Content output (pieces/week, all clients) | 42 | 108 | +157% |
| Average long-form article production time | 4.1 hours | 1.0 hour | -76% |
| Monthly reporting time (all clients combined) | 47 hours | 9 hours | -81% |
| New client onboarding time | 16 hours/client | 3.5 hours/client | -78% |
| Junior team member independence (days to solo delivery) | 18 days | 4 days | -78% |
| Client capacity (comfortable, no overtime) | 13 | 18 | +38% |
| Monthly OS cost | $0 (no system) | $287 (7 users) | n/a |
| Estimated monthly value of capacity gained | n/a | $18,400 (at blended rate) | n/a |

The outcome that was not anticipated: Client satisfaction scores improved. The agency expected efficiency gains; they got quality gains as well. The context library eliminated the variance between account managers — every client received output that met the documented quality standard, regardless of who was handling it that week.

The mistake that nearly broke the implementation: In week 4, the team attempted to onboard all 13 clients to the context system simultaneously. The process collapsed under the volume — no one had time to properly document brand voice for 13 clients in parallel. The solution was to tier the rollout: the 4 highest-revenue clients in week 4, the next 5 in weeks 5–6, the remaining 4 in weeks 7–8. Staged onboarding is not a compromise; it is the correct approach.

The Context Tax Audit: Measure What You’re Losing

Before building an AI Workflow OS, it is worth measuring the specific Context Tax your business is currently paying. This takes approximately 90 minutes and produces a prioritized list of the highest-value automations to build.

How to Conduct a Context Tax Audit

Step 1: Map Your Highest-Volume Information Flows (30 min)

List every regular information movement in your business — every time data, context, or a decision moves between people, tools, or time periods. For each flow, record:

- What information is moving

- From where to where

- How often (daily, weekly, per project)

- How long it takes (the manual version)

- What happens when it is delayed or lost

Step 2: Classify Each Flow by Context Tax Type (20 min)

| Type | Description | AI Lever |
|---|---|---|
| Re-finding | Time spent locating information that already exists | Memory Layer: structured knowledge base with AI search |
| Re-explaining | Time spent re-providing context to tools, contractors, or AI sessions | Memory Layer: context library + Layer 3 injection |
| Re-creating | Time spent building things that already exist somewhere | Memory Layer: template library + deliverable archive |
| Manual routing | Time spent moving information from one tool/person to another | Nervous System: automated routing rules |
| Waiting | Time lost while a decision or approval is delayed in transit | Nervous System: trigger-based escalation |

Step 3: Prioritize by Impact × Frequency (20 min)

Multiply each flow’s time cost by its weekly frequency. Rank the top 5. These are your first 5 automation targets.
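Steps 1 and 3 amount to a small ranking calculation. The sketch below, assuming each flow from the Step 1 map is recorded as minutes per occurrence and occurrences per week (per-project flows converted to a weekly figure), ranks flows by weekly time cost. The `InfoFlow` record and `top_targets` helper are hypothetical, and the sample figures are illustrative rather than audit data.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class InfoFlow:
    """One row of the Step 1 map (hypothetical structure)."""
    what: str                      # what information is moving
    route: str                     # from where to where
    minutes_per_occurrence: float  # how long the manual version takes
    occurrences_per_week: float    # how often, normalized to a week
    if_lost: str = ""              # what happens when it is delayed or lost

    @property
    def weekly_minutes(self) -> float:
        # Step 3: time cost multiplied by weekly frequency
        return self.minutes_per_occurrence * self.occurrences_per_week


def top_targets(flows: List[InfoFlow], n: int = 5) -> List[InfoFlow]:
    """Return the n flows with the highest weekly time cost, largest first."""
    return sorted(flows, key=lambda f: f.weekly_minutes, reverse=True)[:n]


# Illustrative flows only
flows = [
    InfoFlow("Past client decision", "Slack thread -> account manager", 18, 10),
    InfoFlow("Business context", "Founder -> new contractor", 45, 1),
    InfoFlow("Project status", "Airtable -> client email", 20, 13),
]
for flow in top_targets(flows, n=2):
    print(f"{flow.what}: {flow.weekly_minutes:.0f} min/week")
```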

Step 4: Calculate Your Context Tax Rate (20 min)

Sum the weekly hours across your top 10 highest-volume Context Tax flows. Divide by total productive hours per week. This is your baseline Context Tax Rate. Track it quarterly after OS implementation.

Typical findings: Most SMEs discover that 2–3 specific information flows account for 60–70% of their total Context Tax. You do not need to fix everything. Fix the top 3 flows and the system delivers the majority of the available gains.
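Step 4 and the concentration finding can be checked with two short functions. This is a sketch assuming weekly hours per flow have already been estimated; the function names and sample numbers are hypothetical.

```python
from typing import List


def context_tax_rate(flow_hours: List[float], productive_hours_per_week: float) -> float:
    """Step 4: weekly hours of the 10 largest flows divided by productive hours."""
    top_ten = sorted(flow_hours, reverse=True)[:10]
    return sum(top_ten) / productive_hours_per_week


def top_k_share(flow_hours: List[float], k: int = 3) -> float:
    """Fraction of all measured Context Tax hours carried by the k largest flows."""
    ranked = sorted(flow_hours, reverse=True)
    total = sum(ranked)
    return sum(ranked[:k]) / total if total > 0 else 0.0


weekly_hours = [5.0, 4.0, 3.0, 2.0, 1.0, 1.0]  # illustrative audit figures
print(f"Context Tax Rate: {context_tax_rate(weekly_hours, 40):.0%}")  # 16 / 40
print(f"Top-3 share: {top_k_share(weekly_hours):.0%}")                # 12 / 16
```

If the top-3 share comes out in the 60 to 70% range, those three flows are the ones to fix first.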

90-Day OS Rollout Plan

The sequence of implementation matters more than the speed of implementation. The most common failure mode is parallel adoption — building all four layers simultaneously, building each one shallowly, and then discovering that shallow layers do not produce the promised results.

The correct approach is sequential depth: build one layer fully before adding the next.

Month 1: Memory Layer Foundation

The counterintuitive truth: start with Layer 2, not Layer 1.

Most people start with a Layer 1 tool (ChatGPT, Claude) because it produces immediate visible output. But unanchored Layer 1 usage produces mediocre results that reinforce the misconception that “AI isn’t that useful.”

Starting with Layer 2 forces you to document your business context before automating anything. This documentation has value entirely independent of AI — it is the institutional knowledge your business needs to function without the founder as the OS.

Month 1 deliverables:

- Notion workspace structured with brand voice documentation, client workspaces (top 5 clients to start), an SOP library (5 most common task types), and a decision log template

- Context library: 5 reusable prompt context blocks for your highest-volume task types

- Layer 1 tool selected and integrated with a manual context-loading workflow

Month 1 success criteria: You can produce a client deliverable using Layer 1 with context loaded from your Notion workspace in under 15 minutes — and the output quality matches your best manual work.

Month 2: Decision Engine + Nervous System First Automation

With the Memory Layer functional, you now have something worth automating. Month 2 builds the first two Nervous System connections:

- Context injection automation: Your highest-volume repetitive task triggers automatic context assembly and Layer 1 call

- Output routing automation: The output of your most common Layer 1 task is automatically routed to its correct destination

Month 2 deliverables:

- Make (or Zapier) account configured

- 2 core automations live and tested

- Documented trigger-action-outcome for each automation

Month 2 success criteria: Your highest-volume task runs start-to-review without any manual information movement.

Month 3: Layer 4 + System Expansion

Month 3 adds the Output Factory and begins expanding the Nervous System to cover your next 3 highest-impact automations.

Month 3 deliverables:

- Canva Pro (or Gamma or Descript, depending on role) integrated with the existing workflow

- 3 additional Nervous System automations live

- First Context Tax Rate measurement completed and baseline documented

Month 3 success criteria: A complete workflow — from incoming brief to client-ready deliverable — runs with less than 20 minutes of human involvement.

Tools We Tested and Rejected — and Why

Transparency about failures is as useful as transparency about successes. These are the tools that generated significant attention during our 14-month testing period but did not survive practical evaluation.

Jasper AI — Rejected at week 9. Writing quality is indistinguishable from ChatGPT-4o outputs at approximately 3× the cost for comparable plans. The brand voice training feature has promise but requires substantially more setup investment than simply building a context library in Notion that feeds any LLM. No meaningful advantage justified the premium.

Copy.ai — Rejected at week 7. Excellent for short-form marketing copy (subject lines, ad headlines, CTAs). Insufficient for the multi-section, contextually grounded content that makes up most of business operators’ actual volume. The use case is too narrow to justify a dedicated subscription.

Otter.ai — Conditionally retained for meeting-heavy teams, excluded from standard OS recommendation. If your business runs more than 8–10 client or team meetings per week, Otter’s meeting transcription and AI summary capabilities reduce a real Context Tax component (re-explaining what was decided in meetings). For lower meeting volume, the subscription cost is not justified.

Midjourney — Excluded from the standard OS configuration. Image quality is genuinely superior to Canva AI for editorial and creative use cases. The Discord-based interface is the disqualifying factor — it breaks the integration architecture that the Nervous System requires. You cannot route Make automations into Discord-based Midjourney jobs reliably. For businesses where image quality is a primary output requirement, Midjourney is worth the friction; for the standard SME OS, Canva AI’s native integration wins on operational grounds.

Multiple “All-in-One AI Platforms” (not named individually — the market is moving too fast for specific names to remain current): Every all-in-one platform we evaluated followed the same pattern — one genuinely excellent core capability surrounded by average implementations of adjacent features. Purpose-built tools connected by Layer 3 automation consistently outperformed every integrated suite. The integration argument for all-in-one tools is compelling on paper; in practice, the quality gap in peripheral features costs more in editing time than the integration savings return.

Frequently Asked Questions

Q: How is the “AI Workflow OS” different from just using an AI productivity stack?

The architectural difference is the distinction between additive and multiplicative value. A productivity stack adds the benefits of each tool independently. An AI Workflow OS multiplies value because each layer makes every other layer more effective — the Memory Layer makes the Decision Engine more accurate, the Nervous System makes the Memory Layer auto-updating, the Output Factory receives pre-assembled inputs from the other layers. The compounding effect is not theoretical; it is visible in the Context Tax reduction data from our case studies.

Q: We are a team of 2. Is this overkill?

The answer depends on your growth intentions, not your current size. If you intend to stay at 2 people indefinitely, Layer 1 plus a structured Layer 2 is sufficient. If you intend to grow (by adding clients, team members, or revenue), building the OS at 2 people is dramatically easier than retrofitting it at 12. The architecture is the competitive moat; the moat is easier to dig when the team is small.

Q: How do I justify this investment to my team (or myself)?

Use the Context Tax Audit in Section 10. Calculate the actual hours currently consumed by information flows that the OS would automate. Multiply by your hourly equivalent cost. Compare to the OS’s monthly cost ($61–$295 depending on configuration). In every implementation we have documented, the payback period is under 45 days.
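The justification arithmetic can be written down so the inputs are explicit. A minimal sketch follows; it adds one assumption the answer above does not state, a one-time setup cost (for example, hours spent building the Memory Layer), and it spreads savings and subscription costs evenly across days. All names and numbers are hypothetical.

```python
import math


def payback_days(hours_saved_per_week: float, hourly_cost: float,
                 monthly_os_cost: float, setup_cost: float) -> float:
    """Days until recovered time value covers the one-time setup cost.

    Assumes savings accrue evenly over 7-day weeks and the subscription is
    spread evenly over a 365-day year (both simplifications).
    """
    daily_value = hours_saved_per_week * hourly_cost / 7
    daily_subscription = monthly_os_cost * 12 / 365
    net_per_day = daily_value - daily_subscription
    if net_per_day <= 0:
        return math.inf  # the OS never pays for itself under these inputs
    return setup_cost / net_per_day


# Illustrative: 10 h/week recovered at $50/h, $100/month OS, 20 setup hours at $50/h
days = payback_days(10, 50, 100, setup_cost=20 * 50)
print(f"Payback in about {days:.0f} days")
```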

Q: What happens if one of these tools shuts down or changes pricing?

The framework is tool-agnostic. The four-layer architecture remains valid regardless of which specific tool occupies each layer. The most vulnerable point is Layer 3 (the Nervous System): a major pricing change from Make or Zapier would require migration effort. Mitigation: document your automations in tool-agnostic language (trigger → logic → action) so they can be rebuilt in any Layer 3 platform. Never rely on a single automation platform’s proprietary features for critical business flows.
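One way to keep automations portable is to record each one as plain data rather than only inside a platform. The sketch below uses the Weekly Status Update from the case study as the example and stores trigger, logic, actions, and outcome in a hypothetical `AutomationSpec` record that can be rendered as text or exported as JSON. It does not talk to Make or Zapier; it is documentation that a rebuild in any Layer 3 platform could follow.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class AutomationSpec:
    """Platform-neutral record of one automation (hypothetical structure)."""
    name: str
    trigger: str
    logic: List[str] = field(default_factory=list)
    actions: List[str] = field(default_factory=list)
    outcome: str = ""

    def to_text(self) -> str:
        """Render the spec as trigger, logic, and action lines for a runbook."""
        lines = [f"Automation: {self.name}", f"Trigger: {self.trigger}"]
        lines += [f"Logic: {step}" for step in self.logic]
        lines += [f"Action: {step}" for step in self.actions]
        lines.append(f"Outcome: {self.outcome}")
        return "\n".join(lines)


weekly_status = AutomationSpec(
    name="Weekly Status Update",
    trigger="Schedule: every Friday",
    logic=["Collect active project statuses from Airtable", "Group statuses by client"],
    actions=["Generate a client status draft", "Save the draft in Gmail for review"],
    outcome="Account manager reviews and sends each client update",
)
print(weekly_status.to_text())
print(json.dumps(asdict(weekly_status), indent=2))
```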

Q: How do I maintain consistent AI output quality as team members use the system differently?

The Memory Layer solves most of this problem architecturally. If every AI call draws from the same context library, output quality is bounded by the quality of the library, not by the individual team member’s prompting skill. The operational step is to run regular (monthly) quality reviews: pull 5 random AI-assisted outputs, evaluate them against your quality standard, and identify gaps in the context library that explain any quality deficit. Improve the library; do not train the humans to prompt better.
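The monthly review can be made repeatable with a few lines of Python. This sketch assumes AI-assisted outputs are tracked by ID somewhere you can export, and that each reviewed item is tagged with the context-library gap (if any) that explains a miss; the tag names and helper functions are hypothetical.

```python
import random
from collections import Counter
from typing import List, Optional, Tuple


def monthly_sample(output_ids: List[str], k: int = 5, seed: Optional[int] = None) -> List[str]:
    """Pick k distinct outputs at random (fewer if fewer exist)."""
    rng = random.Random(seed)
    return rng.sample(output_ids, min(k, len(output_ids)))


def library_gaps(reviews: List[Tuple[str, bool, str]]) -> Counter:
    """Count gap tags on outputs that failed the quality standard.

    Each review is (output_id, met_standard, gap_tag).
    """
    return Counter(tag for _, ok, tag in reviews if not ok and tag)


ids = [f"out-{n:03d}" for n in range(1, 41)]
print(monthly_sample(ids, seed=7))

reviews = [
    ("out-004", True, ""),
    ("out-011", False, "brand voice: tone too formal"),
    ("out-019", False, "missing product facts"),
    ("out-027", True, ""),
    ("out-033", False, "brand voice: tone too formal"),
]
print(library_gaps(reviews).most_common(1))
```

The most frequent tag points to the context block to improve first.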

Q: Is the AI output quality really good enough for client-facing work?

Not without the Memory Layer. With a properly built context library injected into every call, and with appropriate human review before delivery, the first-draft quality of Claude outputs (for writing-heavy tasks) is sufficient for client-facing use in approximately 82–87% of cases in our testing. The remaining 13–18% require meaningful revision. The benchmark that matters is not “Is this AI output perfect?” but “Is the total time cost (AI generation plus editing) less than the manual equivalent?” In our data, the answer is consistently yes, typically by a factor of 2.5–4×.
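The total-time-cost benchmark can be turned into a quick estimate. This sketch assumes every output gets a light edit and a fraction of outputs (the revision rate) needs extra rework; the function name and every number below are hypothetical, chosen only to show the calculation. The 15% revision rate sits inside the 13–18% range reported in this answer.

```python
from typing import Tuple


def ai_vs_manual(manual_minutes: float, generation_minutes: float,
                 light_edit_minutes: float, revision_rate: float,
                 extra_revision_minutes: float) -> Tuple[float, float]:
    """Return (expected AI-assisted minutes, speed-up factor vs manual)."""
    # Expected cost = generation + light edit on every draft + rework on the revised share
    expected = (generation_minutes + light_edit_minutes
                + revision_rate * extra_revision_minutes)
    return expected, manual_minutes / expected


# Illustrative: 3 h manual draft; 10 min generation; 40 min review; 15% need 60 more min
expected, factor = ai_vs_manual(180, 10, 40, 0.15, 60)
print(f"Expected AI-assisted time: {expected:.0f} min ({factor:.1f}x faster)")
```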

Q: Can I build this entirely with free tools?

Partially. Claude, ChatGPT, Notion, Make, and Canva all have free tiers. A free-tier OS will run into real constraints: most Notion AI features require a paid plan, Make’s free tier caps usage at 1,000 operations per month (insufficient for a functional Nervous System), and Claude’s free tier has usage limits that make it unreliable for automation. For validating the workflow before committing to paid subscriptions, a free-tier OS is a viable starting point. For operational reliance, plan for the $61–$100/month range.
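Whether a free Make tier is enough comes down to monthly volume. The sketch below estimates monthly operations under a simplifying assumption: each scenario run uses about one operation per module it executes. Make’s actual metering may differ, so check Make’s current pricing documentation for how usage is counted and treat this only as a rough check against the 1,000-per-month figure cited above. The automation names echo the case study; the run counts and module counts are hypothetical.

```python
from typing import Dict, Tuple

FREE_TIER_LIMIT = 1_000  # monthly free-tier figure cited in this answer


def monthly_operations(automations: Dict[str, Tuple[int, int]]) -> int:
    """Sum runs_per_month * modules_per_run across all automations.

    Assumes roughly one operation per module per run (a simplification).
    """
    return sum(runs * modules for runs, modules in automations.values())


usage = {
    "Context injection": (100, 5),      # (runs per month, modules per run)
    "Report generation": (13, 8),
    "Client onboarding": (2, 7),
    "Content calendar sync": (200, 3),
    "Weekly status update": (4, 6),
}
total = monthly_operations(usage)
print(f"{total} operations/month; fits free tier: {total <= FREE_TIER_LIMIT}")
```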

Conclusion: The OS Is the Moat

AI tools are becoming commodities. By the end of 2026, access to a capable large language model will be as universal as access to Google Docs: a baseline expectation, not a competitive advantage.

The businesses that will build durable competitive positions with AI are not the ones with access to better tools. They are the ones that have built better operational infrastructure — an AI Workflow OS that accumulates context over time, reduces the decision latency that costs them capacity, and runs core operational functions without requiring the founder to be the human operating system.

The Context Tax is payable in perpetuity for businesses that remain in the current state. For the solo operator with a 41% Context Tax rate, that is 16.4 hours per week (on a 40-hour week), or more than 850 hours per year, consumed by the overhead of operating without an OS. At an hourly equivalent of about $120 or more, that is a six-figure annual cost hiding inside daily friction that feels normal because everyone experiences it.
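The arithmetic behind the 41% example, assuming a 40-hour week worked 52 weeks a year, is short enough to check directly. The function name is hypothetical; the last calculation shows the hourly equivalent at which the annual cost reaches $100,000.

```python
def annual_context_tax_hours(rate: float, hours_per_week: float = 40.0,
                             weeks_per_year: int = 52) -> float:
    """Hours per year lost to the Context Tax at a given rate."""
    return rate * hours_per_week * weeks_per_year


weekly = 0.41 * 40.0
annual = annual_context_tax_hours(0.41)
six_figure_rate = 100_000 / annual  # hourly equivalent where cost reaches $100k

print(f"{weekly:.1f} h/week, {annual:.1f} h/year, six figures above ${six_figure_rate:.0f}/h")
```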

The OS is not a one-time setup. It is a compound-return asset: every client brief added to the Memory Layer makes every future call for that client more accurate. Every automation added to the Nervous System creates a permanent reduction in operational overhead. Every context block refined in the library improves every future output across all tasks that use it.

Start with the Context Tax Audit. Run the 90-day rollout. Build the Memory Layer before you touch the automation tools.

The moat is not the tools. The moat is the context architecture that makes those tools twice as good as everyone else’s.

Resources in This Cluster

Deep dives from the AI for Business Operations cluster:

- Claude vs ChatGPT for Business Writing: 90-Day Test Results — Layer 1 decision guide for operators choosing their primary reasoning engine

- Make vs Zapier vs n8n: Full Cost and Capability Comparison — Layer 3 platform selection based on team size, volume, and technical capacity

- Building a Business Knowledge Base in Notion: The Structured Context Guide — Layer 2 implementation guide with templates

- The Context Tax Calculator: Measuring Operational Inefficiency in Your Business — Interactive tool to quantify your current context tax rate

- AI Workflow OS Starter Pack — Downloadable templates: Notion context library starter kit, Make automation blueprints, and Claude prompt library for business operations

This article reflects testing conducted from May 2025 through April 2026 across 12 business environments. Tool pricing, API availability, and feature sets change frequently. Verify current specifications with each vendor before making purchasing decisions. All pricing cited is in USD unless otherwise noted.

Author methodology note: Data referenced throughout this article was collected from active business implementations — not sandbox testing. Each business type was represented by 2–4 real organizations that agreed to share workflow metrics. Context Tax Rate measurements were calculated from time-tracking data collected in 2-week intervals at baseline and at 30, 60, and 90 days post-implementation. Individual business names are withheld by agreement; aggregate data is published as presented.