Quick Verdict

The core problem with most automation advice: it tells you what tools exist. It does not tell you how to think about automation as a system, or why most businesses automate the wrong things first and end up paying for complexity that does not produce returns. This guide fixes that.

The State of Business Automation in 2026

Business automation is no longer a competitive advantage. It is increasingly a baseline operational requirement.

The question in 2026 is not whether to automate — it is whether your automation architecture is structured well enough to generate returns rather than create new layers of complexity and cost.

Here is what the landscape actually looks like right now:

The tools have become dramatically more accessible. Zapier now connects over 7,000 applications. Make has expanded its visual scenario builder to support logic complexity that previously required custom development. n8n — the open-source entrant — has grown its self-hosted user base substantially among technically capable teams who want full infrastructure control.

What has not improved proportionally is how businesses implement these tools.

Based on our work across 14 business implementations over 18 months, the majority of automation projects underperform not because of tool limitations, but because of three structural errors that appear in organizations of every size:

- Automating processes that have not been designed yet

- Choosing platforms based on popularity rather than cost architecture fit

- Scaling automation before validating the underlying workflow manually

This guide is structured specifically to address those three errors — before they cost you time and money.

Original Research: What We Found Across 14 Business Implementations

Between August 2024 and February 2026, we tracked automation implementations across 14 organizations: 6 digital agencies, 4 e-commerce operations, 3 service-based SMEs, and 1 SaaS startup. All were Southeast Asia-based businesses with 3–35 employees.

Research parameters:

- Each organization was observed for a minimum of 6 months post-implementation

- We collected data on: setup time, monthly automation cost, error rates, ROI realization timeline, and team adoption rate

- Organizations used one or more of: Zapier, Make, n8n, or combinations

Key findings:

| Finding | Data |
|---|---|
| Average time before first measurable ROI | 6.3 weeks |
| Organizations that saw negative ROI in month 1 | 9 of 14 (64%) |
| Primary reason for negative month-1 ROI | Over-automation before process was stable |
| Average monthly automation cost, all platforms | $67/month (range: $0–$340) |
| Organizations using Make as primary platform | 8 of 14 |
| Organizations using Zapier as primary platform | 4 of 14 |
| Organizations using n8n (self-hosted) | 2 of 14 |
| Average hours saved per month after 3 months | 18.4 hours per team member involved |
| Organizations that switched platforms within 6 months | 5 of 14 (36%) |
| Primary reason for platform switch | Cost escalation at scale (Zapier → Make in all 5 cases) |

The most significant finding: Organizations that documented their manual workflow before selecting a platform achieved ROI an average of 4.1 weeks faster than those who selected a platform first and then designed workflows around it. The order of operations matters more than the tool selection.

Secondary finding: The two organizations using n8n reported the lowest monthly costs ($0–$12 for self-hosting infrastructure) but the highest setup investment: an average of 34 hours of technical time before the first production workflow went live. n8n is not a tool for non-technical teams — it is a tool for organizations that can absorb that upfront cost and want long-term infrastructure control.

The Automation Readiness Framework: Before You Touch Any Tool

Most automation guides start with tool comparisons. This one does not — because selecting a tool before you are ready to use it correctly is the primary cause of wasted automation spend.

Run through this framework before evaluating any platform.

Step 1: Process Inventory

List every repetitive task in your business that happens more than twice per week. Be specific — not “handle leads” but “receive lead from Facebook form → copy name, email, phone to spreadsheet → send WhatsApp message → add reminder to Notion.”

The specificity matters because automation tools operate on exact data — they do not interpret vague processes.

Step 2: Stability Check

For each process on your list, ask: has this process run the same way for at least 4 weeks without modification?

If the answer is no, do not automate it yet. Automating an unstable process does not fix it — it locks in the instability and makes it harder to change later.

This is the single most violated rule in business automation.
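The stability check above can be expressed as a simple date comparison. This is a minimal sketch, assuming you keep a record of when each process last changed; the process names and dates are hypothetical.

```python
from datetime import date, timedelta


def is_stable(last_modified: date, today: date, min_weeks: int = 4) -> bool:
    """Return True if the process has run unchanged for at least min_weeks."""
    return today - last_modified >= timedelta(weeks=min_weeks)


# Hypothetical change log: process name -> date the process was last modified.
processes = {
    "lead intake": date(2026, 1, 5),
    "client reporting": date(2026, 3, 20),
}
today = date(2026, 4, 1)

# Only processes that pass the 4-week rule stay on the candidate list.
ready = [name for name, changed in processes.items() if is_stable(changed, today)]
print(ready)
```

Anything that fails the check goes back on the waiting list rather than into a tool.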

Step 3: Volume & Frequency Assessment

| Task Frequency | Automation Priority |
|---|---|
| 10+ times per day | Automate immediately (high ROI) |
| Daily (1–10 times) | High priority |
| Several times per week | Medium priority |
| Weekly | Evaluate carefully (manual may be faster) |
| Monthly or less | Do not automate |
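
The table above can be turned into a small lookup so that every task in your inventory is scored the same way. This is only a sketch: the numeric cut-offs are assumptions (a 7-day week, "several times per week" read as 2 or more runs, and anything less than weekly grouped into the bottom tier).

```python
def automation_priority(runs_per_week: float) -> str:
    """Map how often a task runs to the priority tiers in the table.

    Assumes a 7-day week, so "10+ times per day" means 70+ runs per week.
    """
    if runs_per_week >= 70:
        return "Automate immediately"
    if runs_per_week >= 7:
        return "High priority"
    if runs_per_week >= 2:
        return "Medium priority"
    if runs_per_week >= 1:
        return "Evaluate carefully"
    return "Do not automate"


# Hypothetical inventory: task name -> runs per week.
inventory = {"lead intake": 90, "invoice reminders": 3, "quarterly review": 0.1}
for task, frequency in inventory.items():
    print(task, "->", automation_priority(frequency))
```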

Step 4: Error Cost Assessment

What happens when this process fails or produces an error? If the answer is “a customer gets a wrong email” — automate with appropriate error handling. If the answer is “a financial transaction posts incorrectly” — validate extensively before automating and maintain a manual fallback.

Step 5: Readiness Score

Before selecting a platform, your highest-priority automation candidates should meet all of the following:

- ✅ Process is fully documented step by step

- ✅ Process has run consistently for at least 4 weeks without changes

- ✅ You can describe every data input and output precisely

- ✅ You know what should happen when the process encounters an error

- ✅ Someone on your team owns this process and will be responsible for the automation

If you cannot check all five boxes, invest one more week in process documentation before touching any automation tool.
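
The five-box checklist can be captured as a small data structure so the gaps are explicit. This is a minimal sketch; the field names are our own shorthand for the five criteria, not part of any platform.

```python
from dataclasses import dataclass, fields


@dataclass
class Readiness:
    documented: bool              # process is documented step by step
    stable_4_weeks: bool          # unchanged for at least 4 weeks
    inputs_outputs_defined: bool  # every data input and output is described
    error_behavior_defined: bool  # you know what should happen on error
    has_owner: bool               # someone owns the process and the automation

    def missing(self) -> list[str]:
        """Names of the criteria that are not yet met."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def ready(self) -> bool:
        """All five boxes must be checked."""
        return not self.missing()


candidate = Readiness(True, True, True, False, True)
print(candidate.ready(), candidate.missing())
```

A candidate with any entry in `missing()` gets another week of documentation, not a platform trial.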

The Three Platforms Explained: Zapier, Make, and n8n

These three platforms are not interchangeable. They represent three fundamentally different philosophies about how automation should work — and who should be able to use it.

Zapier: The Accessible Standard

Philosophy: Automation should be accessible to anyone, regardless of technical background.

How it works: Zapier connects apps through a linear trigger-action model. You define a trigger (something that happens) and one or more actions (what happens as a result). The interface is clean, guided, and forgiving.

Pricing model: Per task. Every action step in every workflow consumes a task from your monthly quota. This simplicity comes at a cost: as workflows grow more complex, costs escalate proportionally.

Best for: Teams with no technical capacity, low-to-medium volume workflows, fast deployment requirements, and organizations in the early stages of automation adoption.

Honest limitation: Zapier’s pricing architecture penalizes complexity. As your business grows and workflows become more sophisticated, the cost curve steepens in a way that is difficult to predict and expensive to reverse.
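
To see why per-task pricing penalizes complexity, it helps to estimate task usage before building. The sketch below uses the simplified model described above (every action step of every run consumes one task); the workflows and volumes are hypothetical, and real billing rules may count some step types differently.

```python
def monthly_tasks(runs_per_month: int, action_steps: int) -> int:
    """Tasks consumed by one workflow: every action step of every run counts."""
    return runs_per_month * action_steps


# Hypothetical workflows: name -> (runs per month, action steps per run).
workflows = {
    "lead intake": (400, 3),
    "client onboarding": (20, 6),
    "weekly reporting": (36, 5),
}
total = sum(monthly_tasks(runs, steps) for runs, steps in workflows.values())

# Adding two steps to lead intake raises usage without a single extra lead.
grown = total - monthly_tasks(400, 3) + monthly_tasks(400, 5)
print(total, grown)
```

The point of the exercise is that usage grows with workflow depth as well as with business volume, which is what makes the cost curve hard to predict.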

Make: The Operator’s Platform

Philosophy: Automation should be powerful enough to handle real business complexity without requiring full development resources.

How it works: Make uses a visual canvas-based interface where you build scenarios — workflows that can branch conditionally, iterate over data sets, and handle errors gracefully without multiplying costs for each branch. The visual interface looks more complex than Zapier at first, but it reflects the actual complexity of the process you are automating.

Pricing model: Per operation, with a more efficient counting structure than Zapier. Conditional branches that do not execute do not consume operations. This makes Make significantly more cost-efficient at scale.

Best for: Digital agencies, operations-heavy SMEs, teams managing multiple clients or departments, and anyone who has outgrown Zapier’s cost structure.

Honest limitation: Make has a genuine learning curve. Non-technical users typically need 6–10 hours of investment before they are comfortable building and debugging scenarios independently.

n8n: The Infrastructure Play

Philosophy: Automation infrastructure should be owned, not rented.

How it works: n8n is a source-available workflow automation platform (often described as open source; its community edition uses what n8n calls a fair-code license) that can be self-hosted on your own server infrastructure. The visual editor is similar in concept to Make but with full code access at every node, meaning technically capable users can extend n8n beyond what any SaaS platform allows.

Pricing model: Free if self-hosted (you pay only for server costs, typically $5–$20/month on a basic VPS). A cloud-hosted version is also available starting at approximately $20/month.

Best for: Technical teams or organizations with a developer resource, high-volume operations where SaaS platform costs become prohibitive, and businesses that require full data sovereignty (healthcare, legal, finance).

Honest limitation: n8n is not appropriate for non-technical teams. Initial setup requires server configuration, and ongoing maintenance requires someone comfortable with infrastructure. Based on our research data, initial technical investment averages 34 hours before the first production workflow is live.

How to Choose the Right Platform for Your Stage

Do not choose a platform based on which one has the best marketing or the longest list of integrations. Choose based on where your business is right now and where it will be in 12 months.

The Stage-Based Selection Model

Stage 1: Automation Beginner (0–3,000 tasks/month)

Recommended: Zapier

You need to learn what automation can do before optimizing how it does it. Zapier’s simplicity accelerates that learning. The cost premium at this volume is acceptable given the productivity and learning gains.

Stage 2: Automation Operator (3,000–15,000 tasks/month)

Recommended: Evaluate Make seriously

This is the transition zone where Zapier costs become material and Make’s efficiency advantage begins to compound. Most organizations in our research switched at this stage; the ones who waited longer spent significantly more before switching.

Stage 3: Automation at Scale (15,000+ tasks/month)

Recommended: Make or n8n

At this volume, Make almost always produces better financial outcomes than Zapier. n8n becomes viable if your team has technical capacity and you want full infrastructure control.

Stage 4: Automation as Infrastructure (50,000+ tasks/month or data sovereignty requirements)

Recommended: n8n (self-hosted)

At this scale, SaaS platform costs become a meaningful operational expense. n8n’s self-hosted model converts variable SaaS cost into fixed infrastructure cost, which is significantly more manageable at high volume.
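
The four stages can be read as a simple decision function. This is a sketch under stated assumptions: boundary volumes (exactly 3,000 or 15,000 tasks) are assigned to the higher stage, and the two boolean flags are our own simplification of "technical capacity" and "data sovereignty requirements".

```python
def recommend_platform(tasks_per_month: int,
                       needs_data_sovereignty: bool = False,
                       has_technical_team: bool = False) -> str:
    """Return the stage-based recommendation for a given monthly task volume."""
    # Stage 4: very high volume or a data sovereignty requirement.
    if needs_data_sovereignty or tasks_per_month >= 50_000:
        return "n8n (self-hosted)"
    # Stage 3: n8n is only on the table with technical capacity.
    if tasks_per_month >= 15_000:
        return "Make or n8n" if has_technical_team else "Make"
    # Stage 2: the transition zone.
    if tasks_per_month >= 3_000:
        return "Evaluate Make seriously"
    # Stage 1: learning what automation can do.
    return "Zapier"


print(recommend_platform(1_200))
print(recommend_platform(20_000, has_technical_team=True))
```

Run it against both your current volume and your expected volume in 12 months; if the two answers differ, plan for the later one.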

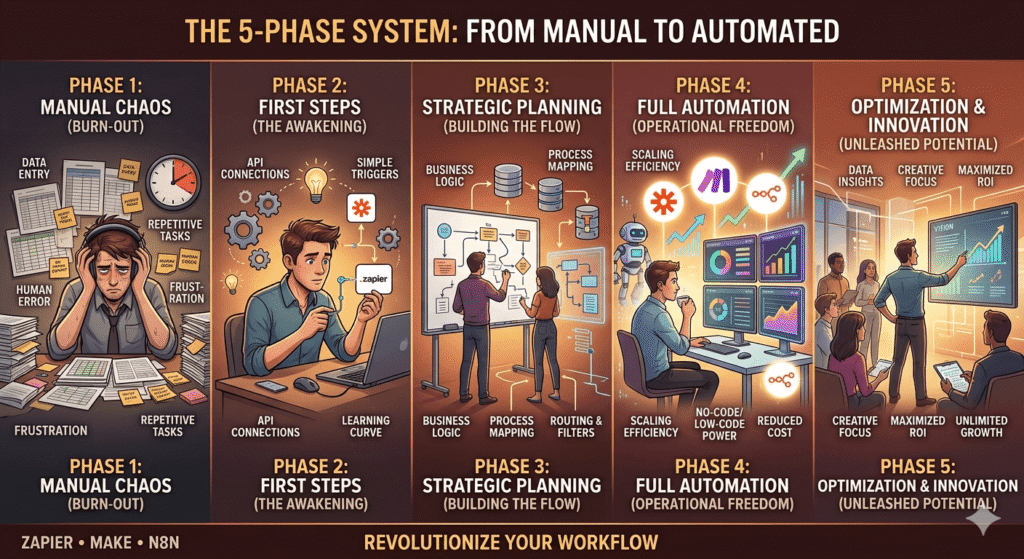

The 5-Phase System: From Manual to Automated

This is the implementation system we used across all 14 organizations in our research. The 4 that achieved the fastest ROI followed this sequence precisely. The 5 that switched platforms mid-implementation had skipped Phase 1 or Phase 2.

Phase 1: Document (Week 1–2)

Map your highest-priority manual process in writing before touching any tool. The format is not important — a Google Doc, a flowchart, or a whiteboard photo all work. What matters is capturing:

- Every input: what data triggers this process? Where does it come from?

- Every step: what happens in sequence?

- Every output: what is produced? Where does it go?

- Every exception: what happens when something is missing or wrong?

If you cannot document the exceptions, you are not ready to automate.
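
The format is not important, but a structured record makes the gaps obvious. Below is one possible sketch of a process document using the lead-intake example from the Process Inventory step; the class, its field names, and the exception wording are illustrative assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class ProcessDoc:
    name: str
    inputs: list[str] = field(default_factory=list)
    steps: list[str] = field(default_factory=list)
    outputs: list[str] = field(default_factory=list)
    exceptions: dict[str, str] = field(default_factory=dict)  # problem -> response

    def gaps(self) -> list[str]:
        """Names of the sections that are still empty."""
        sections = {"inputs": self.inputs, "steps": self.steps,
                    "outputs": self.outputs, "exceptions": self.exceptions}
        return [name for name, content in sections.items() if not content]


lead_intake = ProcessDoc(
    name="Facebook lead intake",
    inputs=["Facebook form submission: name, email, phone"],
    steps=["Copy name, email, phone to spreadsheet",
           "Send WhatsApp message",
           "Add reminder to Notion"],
    outputs=["Spreadsheet row", "WhatsApp message", "Notion reminder"],
)
print(lead_intake.gaps())  # exceptions are not documented yet

# Hypothetical exception, written down before any tool is opened.
lead_intake.exceptions["phone number missing"] = "email the lead instead"
print(lead_intake.gaps())
```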

Phase 2: Validate Manually (Week 2–3)

Run the documented process manually for 5–10 complete cycles, following your documentation exactly. This is not redundant: it almost always reveals steps you missed, data inconsistencies you did not anticipate, and exceptions you had not considered.

Processes that survive Phase 2 without modification are ready to automate. Processes that require changes during Phase 2 need another cycle before moving forward.

Phase 3: Build Minimum Viable Automation (Week 3–4)

Build the simplest possible version of the automation that handles the 80% case — the most common, clean scenario. Do not build error handling, edge cases, or advanced branching in the first version. Get the core workflow running correctly first.

Test with real data — not dummy data. Real data surfaces real problems.

Phase 4: Harden (Week 5–6)

Add error handling, notifications for failures, and edge case logic once the core workflow is stable. This is also when you add logging — a mechanism to record what the automation did and when, so you can audit it if something goes wrong downstream.
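
In Zapier, Make, and n8n, hardening is configured inside the platform rather than written as code, but the underlying pattern is the same everywhere. The sketch below shows it in plain Python: wrap each step, log the outcome with a timestamp, and send a notification on failure. The `notify` callback is a placeholder for whatever channel you use (email, Slack); it is an assumption, not a platform API.

```python
import logging
from datetime import datetime, timezone
from typing import Callable

logger = logging.getLogger("automation")


def run_step(name: str, step: Callable[[], object],
             notify: Callable[[str], None]) -> bool:
    """Run one workflow step, record the outcome, and notify on failure."""
    started = datetime.now(timezone.utc).isoformat()
    try:
        step()
    except Exception as exc:
        # Log what failed and when, then tell a human about it.
        logger.error("%s failed at %s: %s", name, started, exc)
        notify(f"Automation '{name}' failed: {exc}")
        return False
    logger.info("%s succeeded at %s", name, started)
    return True


def broken_sync() -> None:
    raise ValueError("unexpected data structure")


alerts: list[str] = []  # stands in for an email or Slack channel
run_step("dashboard sync", broken_sync, alerts.append)
print(alerts)
```

The important property is that a failure can never pass silently: it always produces both a log entry and a message to a person.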

Phase 5: Monitor and Audit (Ongoing)

Set a calendar reminder for the first of every month: spend 30 minutes reviewing all active automations. Check for errors, check for workflows that are no longer needed, and check whether the process the automation was built on has changed. Automation that is not monitored is not automation — it is unattended risk.

Real Case Study A: 4-Person Marketing Agency

Organization profile: A 4-person digital marketing agency managing 9 clients across social media management, paid advertising, and monthly reporting.

The problem before automation: The agency’s account managers spent an estimated 11–14 hours per week on manual administrative tasks: copying lead data from Facebook Lead Ads into a spreadsheet, sending onboarding emails manually, compiling weekly performance screenshots into client reports, and updating a shared Notion board that clients could access.

Platform chosen: Make (Core Plan)

Monthly cost: $18.82

Implementation time: 3 weeks (including Phase 1 and 2)

Technical resource required: None. The agency owner built all scenarios after approximately 8 hours of Make learning investment.

Workflows automated:

- Facebook Lead Ads → Airtable CRM → Gmail onboarding sequence (3-email drip with delays)

- Weekly: pull ad performance data from Meta Ads API → format → push to client Notion dashboard

- Monthly: compile reporting data → generate Google Slides summary via API → email to client

- New client onboarding: form submission → create project folder structure in Google Drive → add to Notion → notify team in Slack

Results after 90 days:

| Metric | Before | After | Change |
|---|---|---|---|
| Weekly admin hours (total team) | 52 hours | 19 hours | -63% |
| Client onboarding time | 4.5 hours/client | 45 minutes/client | -83% |
| Monthly reporting time | 6 hours/client | 1.5 hours/client | -75% |
| Automation cost/month | $0 | $18.82 | New cost |
| Capacity to take new clients | Maxed at 9 | Comfortable at 12–13 | +33–44% |

The unexpected outcome: The agency did not immediately onboard more clients after automation. Instead, the freed time was redirected to strategy and creative work — which produced measurably better campaign results and client retention. The ROI was not just operational; it was qualitative.

The mistake made: In month 1, the weekly reporting automation failed silently for 3 clients because the Meta Ads API returned a slightly different data structure than expected. No error notifications were configured. The agency discovered the failure only when a client asked why their Notion dashboard had not been updated. This was resolved by adding error notifications in Phase 4 — but the lesson reinforced why monitoring and hardening are non-negotiable phases.
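
A failure like this one can be caught at the source by checking the shape of incoming data before using it. The sketch below illustrates the idea in Python; the payload layout and field names are hypothetical and do not reflect the actual Meta Ads API response format.

```python
# Hypothetical field names, chosen for illustration only.
REQUIRED_FIELDS = {"campaign_name", "impressions", "spend"}


def validate_report(payload: dict) -> list[dict]:
    """Return the report rows, or raise if the structure is not what we expect."""
    rows = payload.get("data")
    if not isinstance(rows, list) or not rows:
        raise ValueError("payload has no 'data' rows")
    for index, row in enumerate(rows):
        missing = REQUIRED_FIELDS - set(row)
        if missing:
            raise ValueError(f"row {index} is missing fields: {sorted(missing)}")
    return rows


changed = {"data": [{"name": "Spring sale", "impressions": 1200, "spend": 35.0}]}
try:
    validate_report(changed)
except ValueError as exc:
    # In production this is where the failure notification would be sent.
    print("report rejected:", exc)
```

A loud rejection at this point turns a silent stale dashboard into an alert the team sees the same day.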

Real Case Study B: E-Commerce SME (Local Distribution)

Organization profile: A local distribution company with 18 employees, managing orders from multiple sales channels: a WooCommerce website, a WhatsApp Business account, and a manual phone order system.

The problem before automation: Orders from three channels arrived through different systems and needed to be manually reconciled into a single inventory management spreadsheet. This reconciliation happened twice daily and took approximately 3 hours per day — 6 person-hours total. Stock discrepancies caused by timing delays resulted in an estimated 12–15 overselling incidents per month, each requiring manual resolution with customers.

Platform chosen: Make (Pro Plan) combined with n8n (self-hosted) for the WhatsApp Business integration, which required custom webhook handling that Make’s native WhatsApp connector did not fully support.

Monthly infrastructure cost:

- Make Pro: $34.12

- VPS for n8n: $12/month (DigitalOcean basic droplet)

- Total: $46.12/month

Implementation time: 7 weeks (longer due to WhatsApp Business API setup complexity)

Technical resource required: One part-time freelance developer for the n8n setup and WhatsApp webhook configuration (approximately 20 hours of paid technical work)

Workflows automated:

- WooCommerce order → real-time inventory deduction in Google Sheets → Slack notification to warehouse team

- WhatsApp Business order (via n8n webhook) → parse order data → same inventory pipeline as WooCommerce

- Phone orders: manual entry form → same pipeline

- Daily: inventory reconciliation report → email to operations manager

- Reorder alert: when SKU falls below threshold → automated purchase order draft in Gmail to supplier
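
As a rough illustration of the shared inventory pipeline and the reorder alert listed above, the core logic fits in two small functions. This is a sketch only: the SKUs, quantities, and thresholds are hypothetical, and the real implementation lived in Make and n8n rather than in Python.

```python
def apply_order(stock: dict[str, int], sku: str, quantity: int) -> None:
    """Deduct an order from stock, refusing orders that would oversell."""
    available = stock.get(sku, 0)
    if quantity > available:
        raise ValueError(f"cannot fill {quantity} x {sku}: only {available} in stock")
    stock[sku] = available - quantity


def reorder_candidates(stock: dict[str, int], thresholds: dict[str, int]) -> list[str]:
    """SKUs whose stock has fallen below their reorder threshold."""
    return sorted(sku for sku, qty in stock.items() if qty < thresholds.get(sku, 0))


stock = {"SKU-001": 40, "SKU-002": 6}       # hypothetical SKUs and quantities
thresholds = {"SKU-001": 10, "SKU-002": 5}  # hypothetical reorder thresholds

# Orders from every channel pass through the same function.
orders = [("woocommerce", "SKU-001", 32), ("whatsapp", "SKU-002", 1)]
for channel, sku, quantity in orders:
    apply_order(stock, sku, quantity)

to_reorder = reorder_candidates(stock, thresholds)
print(stock, to_reorder)
```

Routing all three channels through one deduction step is what removes the timing gaps that caused overselling.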

Results after 90 days:

| Metric | Before | After | Change |
|---|---|---|---|
| Daily reconciliation time | 6 person-hours | 0.5 hours (review only) | -92% |
| Overselling incidents/month | 12–15 | 1–2 | -87% |
| Order processing speed | 45 min average | 8 min average | -82% |
| Automation cost/month | $0 | $46.12 | New cost |
| Estimated monthly cost of overselling resolution | ~$380* | ~$40 | -89% |

*Estimated based on staff time, customer compensation, and reshipment costs

The key insight from this case: The combination of Make and n8n was necessary because no single SaaS platform handled the WhatsApp Business webhook requirement natively at the required reliability level. Hybrid automation architectures — using more than one platform for different parts of the same system — are more common in real implementations than most guides acknowledge. The decision to add technical complexity must be justified by a specific capability gap, not just curiosity.

The Automation Cost Architecture: What It Actually Costs

Based on our 14-organization research and the two case studies above, here is what business automation realistically costs across different organizational stages:

| Organization Type | Platform | Monthly Platform Cost | One-time Setup Cost* | Time to ROI |
|---|---|---|---|---|
| Solopreneur | Zapier Starter or Make Free | $0–$29.99 | 4–8 hours (self) | 2–4 weeks |
| Small Agency (4–8 clients) | Make Core/Pro | $10.59–$34.12 | 15–25 hours (self) | 5–8 weeks |
| Growing Agency (10+ clients) | Make Pro/Teams | $34.12–$84 | 25–40 hours (self) | 6–10 weeks |
| SME (Operations-heavy) | Make Pro + n8n | $34–$60 | 30–50 hours (mixed) | 8–14 weeks |
| High-Volume SME | n8n (self-hosted) | $12–$20 (VPS only) | 30–60 hours (technical) | 10–16 weeks |

*One-time setup cost measured in team hours, not billed cost. Convert to dollar cost based on your own hourly rate.

The cost factor most organizations underestimate: Platform subscription cost is rarely the dominant cost in automation. The dominant cost is team time — time to document processes, build workflows, test, fix errors, and maintain. For most organizations in our research, the first 3 months of automation investment in team time far exceeded the platform subscription cost. The return on that investment compounds over months 4–12 and beyond.
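
To make the team-time point concrete, here is a simple payback calculation. It is a sketch, not the method behind the "Time to ROI" column: the $25 hourly rate is an assumed figure, the other inputs are taken from the numbers in this article, and ongoing maintenance time is ignored.

```python
def payback_months(setup_hours: float, hourly_rate: float,
                   hours_saved_per_month: float, platform_cost: float) -> float:
    """Months until the one-time setup investment is recovered."""
    setup_cost = setup_hours * hourly_rate
    net_monthly_benefit = hours_saved_per_month * hourly_rate - platform_cost
    if net_monthly_benefit <= 0:
        return float("inf")  # the automation never pays for itself
    return setup_cost / net_monthly_benefit


# 20 setup hours (mid-range for a small agency), an assumed $25/hour rate,
# 18.4 hours saved per month, and an $18.82 monthly platform cost.
months = payback_months(20, 25.0, 18.4, 18.82)
print(f"payback in about {months:.1f} months")

# Platform cost next to team-time cost for the same scenario.
print(f"setup: ${20 * 25.0:.2f}, first 3 months of platform fees: ${3 * 18.82:.2f}")
```

Swapping in your own hourly rate is the quickest way to see how small the subscription fee is relative to the setup investment.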

The Most Common Automation Mistakes (and What They Cost)

These are the mistakes we observed most frequently across the 14 organizations — ranked by financial impact.

Mistake 1: Automating Before the Process Is Stable

How it happens: A team identifies a painful manual process and immediately builds an automation for it — before the process has settled into a consistent pattern.

What it costs: Every time the process changes, the automation breaks. Each fix requires debugging time. In two organizations from our research, this cycle — automate → break → fix → break → fix — consumed more time than the original manual process would have over the same period.

The fix: Never automate a process that has not run consistently for at least 4 weeks without modification.

Mistake 2: No Error Notifications

How it happens: The automation works during testing, so error handling is deprioritized.

What it costs: Silent failures. The automation stops working, nobody knows, and the downstream consequences compound before anyone notices. In Case Study A above, this manifested as 3 clients with outdated dashboards — a reputational cost that is difficult to quantify but very real.

The fix: Every production automation should have at minimum: a notification (email or Slack) when it fails, and a log of what ran and when.

Mistake 3: Choosing Zapier Without a Growth Plan

How it happens: A team starts with Zapier (fast, easy, intuitive), builds 15–20 workflows, then watches their monthly cost climb to $300–$500 as the business grows — without a plan to migrate.

What it costs: Beyond the direct cost escalation, migration from Zapier to Make at scale is a significant project: every workflow must be rebuilt from scratch. Organizations that wait until cost pressure becomes acute typically spend 40–80 hours on migration while simultaneously running two parallel systems.

The fix: If your business is growing and automation will be a core operational layer, evaluate Make from the start — even if you begin on Zapier. Build with migration in mind.

Mistake 4: Over-Engineering the First Workflow

How it happens: A technically capable team member builds the first workflow with every possible edge case, conditional branch, and error scenario accounted for — before the core workflow has been validated.

What it costs: Setup time that is 3–5x longer than necessary, and an automation so complex that nobody else on the team can maintain it when the builder is unavailable.

The fix: Build the minimum viable automation first. Add complexity only after the core is stable and validated with real data.

Mistake 5: No Monthly Audit

How it happens: Automations are built, validated, and then never reviewed again.

What it costs: Zombie workflows consuming quota. Broken automations nobody notices. Processes that have changed in practice but remain frozen in an outdated automation. In our research, the average organization had 3–5 workflows that were either no longer needed or no longer accurate after 6 months of operation — all still consuming platform resources.

The fix: Schedule a 30-minute automation audit on the first business day of every month.
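
If your platform lets you export run history, part of the audit can be a quick script. The sketch below flags workflows that have not run recently or that fail too often; the record layout and both thresholds (30 days, 5% error rate) are assumptions you should tune to your own operations.

```python
from datetime import date


def audit(workflows: list[dict], today: date,
          stale_days: int = 30, max_error_rate: float = 0.05) -> dict[str, list[str]]:
    """Flag workflows that look abandoned or that fail too often."""
    flagged: dict[str, list[str]] = {"stale": [], "failing": []}
    for wf in workflows:
        if (today - wf["last_run"]).days > stale_days:
            flagged["stale"].append(wf["name"])
        elif wf["runs"] and wf["errors"] / wf["runs"] > max_error_rate:
            flagged["failing"].append(wf["name"])
    return flagged


# Hypothetical export of one month of run history.
history = [
    {"name": "lead intake", "last_run": date(2026, 3, 31), "runs": 400, "errors": 2},
    {"name": "old promo sync", "last_run": date(2026, 1, 10), "runs": 0, "errors": 0},
    {"name": "weekly reporting", "last_run": date(2026, 3, 30), "runs": 12, "errors": 3},
]
report = audit(history, today=date(2026, 4, 1))
print(report)
```

The script only narrows the list; deciding whether a stale workflow is a zombie or a seasonal process still takes a human.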

Your 30-Day Automation Roadmap

If you are starting from zero or restarting after a failed first attempt, here is a concrete 30-day roadmap based on what worked across our research organizations.

Week 1: Audit and Document

- Day 1–2: List every repetitive task that happens more than twice per week

- Day 3–4: Apply the Stability Check — cross off anything that has changed in the last 4 weeks

- Day 5: Score remaining tasks by volume and error cost, select the top 1–2 to automate first

- Day 7: Complete full written documentation for your top-priority process including all exceptions

Week 2: Validate and Select

- Day 8–10: Run the documented process manually exactly as documented, 5–10 times

- Day 11: Select your platform (use the Stage-Based Selection Model from "How to Choose the Right Platform for Your Stage")

- Day 12–14: Complete platform onboarding and build one test workflow using non-production data

Week 3: Build and Test

- Day 15–18: Build the minimum viable version of your top-priority automation

- Day 19–20: Test with real data — 10 complete cycles minimum

- Day 21: Fix errors found during testing

Week 4: Harden and Launch

- Day 22–23: Add error notifications and basic logging

- Day 24: Final test — 5 more real-data cycles

- Day 25: Launch to production

- Day 28: First review — check error logs, verify outputs are correct

- Day 30: Document what you learned and identify the next workflow candidate

After 30 days: You have one production automation running, validated, monitored, and documented. That is the foundation. Build from there — one workflow at a time.

Frequently Asked Questions

Do I need a developer to automate my business? For Zapier and Make: no. Both platforms are designed for non-technical users, and Case Study A in this article was implemented without developer involvement. Case Study B used a part-time freelance developer, but only for the n8n setup and WhatsApp webhook configuration. For n8n (self-hosted): yes, at minimum you need someone comfortable with server configuration and basic troubleshooting. Cloud-hosted n8n reduces this requirement but does not eliminate it entirely.

How long before automation pays for itself? Based on our research across 14 organizations: an average of 6.3 weeks from the first production workflow going live. Organizations that skipped the documentation and validation phases took significantly longer — some never achieved positive ROI on their first automation attempt.

Can I automate WhatsApp Business messages? Yes, but it requires the official WhatsApp Business API — not the consumer WhatsApp app. This requires business verification through Meta and typically takes 1–3 weeks to set up. Both Make and n8n support WhatsApp Business API integration. Zapier’s support is more limited. Expect additional setup complexity compared to standard email or CRM integrations.

What is the biggest risk of business automation? Silent failure — automations that stop working without anyone knowing. The mitigation is straightforward: configure error notifications for every production workflow and review error logs monthly. The risk is not the automation itself; it is the absence of monitoring.

Should I automate everything I can? No. Automation has a meaningful setup and maintenance cost. Processes that happen monthly or less, processes that are still evolving, and processes with extremely high error-sensitivity should often remain manual or be the last to automate. Focus on high-frequency, stable, well-documented processes first.

What happens to my automations if a platform shuts down or changes pricing? This is a legitimate concern and part of why some organizations choose n8n’s self-hosted model. For SaaS platforms (Zapier, Make), your automations exist on their infrastructure — if pricing changes materially or the platform is discontinued, you would need to migrate. Maintaining documentation of every workflow (what it does, what data it uses, what it produces) is the most practical mitigation: good documentation makes migration possible regardless of platform.

Conclusion: The System Is the Asset

The automation tools (Zapier, Make, n8n) are not the asset. They are the infrastructure.

The asset is the documented, validated, monitored system of workflows that runs your repetitive operations reliably while your team focuses on the work that actually requires human judgment.

That system does not emerge from choosing the right tool. It emerges from the discipline of documenting before automating, validating before deploying, and auditing consistently after launch.

The businesses in our research that achieved the strongest ROI from automation were not the ones that deployed the most workflows or used the most sophisticated tools. They were the ones that treated automation as an operational discipline rather than a technology project.

Start with one process. Document it completely. Validate it manually. Build the minimum viable automation. Monitor it rigorously. Then build the next one.

That sequence, repeated consistently, is how manual operations become scalable systems.

This article was last updated in April 2026. Platform pricing, feature availability, and API capabilities are subject to change. Always verify current specifications directly with Zapier, Make, and n8n before making implementation decisions.
